‘OMIGOD’ Azure Critical Bugfix? Do It Yourself—Because Microsoft Won’t

Using OMI on Microsoft Azure? Drop everything and patch this critical vulnerability, snappily named OMIGOD. But wait! You probably don’t know whether you’re using OMI or not.

Y’see, Open Management Infrastructure (OMI) is often silently installed on Azure—as a prerequisite. And, to make matters worse, Microsoft hasn’t rolled out the patch for you—despite publishing the code a month ago. So much for the promise of ‘The Cloud.’

What a mess. In today’s SB Blogwatch, we put the “mess” into message.

Your humble blogwatcher curated these bloggy bits for your entertainment. Not to mention: Difficult Hollywood.

OMI? DIY PDQ

What’s the craic? Simon Sharwood says—“Microsoft makes fixing deadly OMIGOD flaws on Azure your job”:

“Your next step”

Microsoft Azure users running Linux VMs in the … Azure cloud need to take action to protect themselves against the four “OMIGOD” bugs in the … OMI framework, because Microsoft hasn’t. … The worst is rated critical at 9.8/10 … on the Common Vulnerability Scoring System.

…

Complicating matters is that running OMI is not something Azure users actively choose. … Understandably, Microsoft’s actions – or lack thereof – have not gone down well. [And it] has kept deploying known bad versions of OMI. … The Windows giant publicly fixed the holes in its OMI source in mid-August … and only now is advising customers.

…

Your next step is therefore obvious: patch ASAP.

What, what? Sergiu Gatlan confirms the bizarre sitrep—“Microsoft asks Azure Linux admins to manually patch OMIGOD bugs”:

“Microsoft has no mechanism”

The four security flaws (allowing remote code execution and privilege escalation) were found in the … OMI software agent silently installed on more than half of Azure instances … and millions of endpoints. … While working to address these bugs, Microsoft … on August 11 exposed all the details a threat actor would need to create an OMIGOD exploit.

…

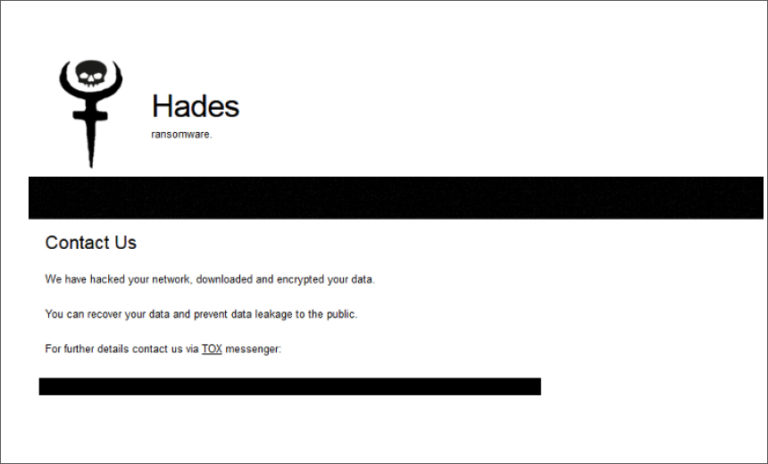

OMIGOD affects Azure VMs that use Linux management solutions with services such as Azure Automation, Azure Automatic Update, Azure Operations Management Suite (OMS), Azure Log Analytics, Azure Configuration Management, or Azure Diagnostics. [It] enables attackers to escalate privileges and execute code remotely.

…

To make things worse … Microsoft has no mechanism available to auto-update vulnerable agents.

Who found it? Wiz’s Nir Ohfeld explains, “Why the OMI Attack Surface is interesting to attackers”:

“Completely invisible to customers”

The OMI agent runs as root with high privileges. Any user can communicate with it. … As a result, OMI represents a possible attack surface where a vulnerability allows external users or low privileged users to remotely execute code on target machines or escalate privileges.

…

In the scenarios where the OMI ports (5986/5985/1270) are accessible to the internet … this vulnerability can be also used by attackers to obtain initial access to a target Azure environment and then move laterally within it. Thus, an exposed HTTPS port is a holy grail for malicious attackers.

…

This is a textbook RCE vulnerability, straight from the 90’s but happening in 2021 and affecting millions of endpoints. With a single packet, an attacker can become root on a remote machine by simply removing the authentication header.

…

Problematically, this “secret” agent is both widely used … and completely invisible to customers as its usage within Azure is completely undocumented. There is no easy way for customers to know which of their VMs are running OMI, since Azure doesn’t mention OMI anywhere.
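Since the quote above names the ports OMI listens on (5986/5985/1270), one rough way to see whether a machine exposes them is a plain TCP connect test. The sketch below is only that: the function name `open_omi_ports` and the timeout are illustrative choices, an open port does not prove OMI is behind it, and a closed port does not prove OMI is absent (it may listen only on a local socket).

```python
import socket

# Ports named in the Wiz write-up quoted above.
OMI_PORTS = (5985, 5986, 1270)


def open_omi_ports(host, ports=OMI_PORTS, timeout=2.0):
    """Return the subset of `ports` that accept a TCP connection on `host`.

    Hypothetical helper for illustration; it only checks reachability,
    not whether the listener is OMI or whether it is patched.
    """
    reachable = []
    for port in ports:
        try:
            # create_connection raises OSError on refusal, timeout,
            # or name-resolution failure.
            with socket.create_connection((host, port), timeout=timeout):
                reachable.append(port)
        except OSError:
            # Refused, filtered, or timed out: treat as not reachable.
            pass
    return reachable
```

Calling `open_omi_ports("10.0.0.4")` (a made-up address) returns a list of whichever of the three ports answered. Only run it against hosts you are responsible for.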

It’s a very fine bug indeed. Here’s Paul “ugly” Ducklin [You’re fired—Ed.]:

“If you look after Linux servers”

Astonishingly, the bug seems to boil down to a laughably easy trick. Rather than guessing a valid authentication token to insert into a fraudulent OMI web request, you simply omit all mention of the authentication token altogether, and you’re in!

…

Many Linux-on-Azure users may be unaware that they have OMI, and therefore not even know to look out for security problems with it. That’s because the OMI software may have been installed automatically. … If you look after Linux servers, and in particular if they’re hosted on Azure, we suggest that you check.
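To make the "omit the token and you're in" trick concrete, here is a toy model of a fail-open authentication check next to a fail-closed one. This is not OMI's code (OMI is not written in Python), and every name in it (`AuthInfo`, `VALID_TOKENS`, both functions) is hypothetical. It only models the pattern the quotes describe: a request with no authentication header ends up treated as root.

```python
from dataclasses import dataclass

# Hypothetical token -> uid table, for illustration only.
VALID_TOKENS = {"s3cret-token": 1000}


@dataclass
class AuthInfo:
    uid: int = 0  # dangerous default: uid 0 means root


def authenticate_fail_open(headers):
    """Buggy pattern: only validates the token when one is present."""
    auth = AuthInfo()
    token = headers.get("Authorization")
    if token is not None:
        if token not in VALID_TOKENS:
            raise PermissionError("bad token")
        auth.uid = VALID_TOKENS[token]
    # Bug: with no header at all, nothing overwrote the root default.
    return auth


def authenticate_fail_closed(headers):
    """Safer pattern: a missing token is rejected just like a bad one."""
    token = headers.get("Authorization")
    if token is None or token not in VALID_TOKENS:
        raise PermissionError("missing or bad token")
    return AuthInfo(uid=VALID_TOKENS[token])
```

With the buggy version, a wrong token is rejected but an empty `headers` dict sails through with `uid == 0`. The fail-closed version rejects both.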

We’ll drop everything and fix that. Right? Right??? Deputy Cartman’s not so sure:

“Maybe they’ve expedited matters”

Hoooo boy, am I glad I’m not in the security department of the predominantly Azure-using US defense company whose outdated practices just made me stop showing up? Maybe they’ve expedited matters and they’ll be done with their meetings on when to have the meeting to schedule the Change Authorization Board meeting.

But why isn’t it automatic? Isn’t that one of the benefits of the cloud? u/securityboxtc gets real:

“Fewer ‘secret’ agents”

Microsoft’s CVE states remediation requires adding the OMI repo to the VM and manually updating it. Either Microsoft itself doesn’t trust its own update mechanisms or the only remediation is manually updating.

…

Either way, there needs to be more documentation and fewer “secret” agents. Fewer as in exactly none.
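If the fix really is a manual package update, the first chore is working out which machines still need it. A minimal sketch of the version comparison follows. It assumes the first fixed OMI release is 1.6.8-1, which comes from Microsoft's advisory and is not stated in this article, so verify it before relying on it. It also assumes you have already pulled the installed version string from your package manager (for example via `dpkg -l omi` or `rpm -q omi`, assuming the package is named `omi`). The function names are hypothetical.

```python
import re

# Assumption: 1.6.8-1 is the first patched OMI release (check the advisory).
PATCHED = "1.6.8-1"


def parse_version(text):
    """Turn '1.6.8-1' or '1.6.8.1' into a tuple of ints such as (1, 6, 8, 1)."""
    parts = re.findall(r"\d+", text)
    if not parts:
        raise ValueError(f"no version digits in {text!r}")
    return tuple(int(p) for p in parts)


def is_vulnerable(installed, patched=PATCHED):
    """True if `installed` sorts strictly below the patched release."""
    return parse_version(installed) < parse_version(patched)
```

Comparing integer tuples rather than raw strings avoids the classic trap where "1.6.10" sorts before "1.6.8" alphabetically.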

Wait. Pause. Linux on a Microsoft IaaS? This Anonymous Coward offers a colorful metaphor:

“Fairly fundamental question”

Let’s start with a fairly fundamental question: … If you’re at a minimum Linux aware, why on God’s green Earth would you ever want to even go near a Microsoft product to run it on? That’s like building a bank safe out of meringue.

Why indeed? Kevin Beaumont—@GossiTheDog—emits this epic Twitter thread:

“Super not confidence inspiring”

For anybody who hasn’t caught the #OMIGOD patching thing: Azure haven’t patched it for customers. They silently rolled out an agent [that] allowed no-authentication remote code execution as root, and then the fix is buried in the random CVE.

…

They’ve also failed to update their own systems in Azure to install the patched version on new VM deployments. It’s honestly jaw dropping.

…

It’s a spectacular cloud security issue, which also extends into the Azure Gov cloud service. … I don’t know how MS went public without fixing the issue first: It’s super not confidence inspiring as a customer—makes me wonder what skeletons are lurking.

And jazzylarry’s confidence is definitely not inspired:

“Dies within a day or two”

I have products in Azure. I specifically love the fact that Azure’s fabric is so unstable and unreliable that even socket connections between two VMs on the same VLAN can go off into the ether. And the continuous notification emails over the past few years about various monitoring and logging services having connectivity issues (probably the same as I’m experiencing with my services) do not promote any confidence. My AWS services, whatever their issues, never had connectivity issues because of the fabric. I could run connections between two VMs all day long … with no issue. In Azure, such an exercise usually dies within a day or two, and certainly within a week.

Meanwhile, u/JasonWarren imagines OMI’s Agile storyboard:

Microsoft wanted their customers running Linux to experience what it was like running a Windows 98 machine connected directly to the internet.

And Finally:

I’m a sucker for a backlit sunset Concorde landing

NSFW warning: A couple of F-bombs.

You have been reading SB Blogwatch by Richi Jennings. Richi curates the best bloggy bits, finest forums, and weirdest websites … so you don’t have to. Hate mail may be directed to @RiCHi. Ask your doctor before reading. Your mileage may vary. E&OE. 30.

Image sauce: Greg Rosenke (via Unsplash)